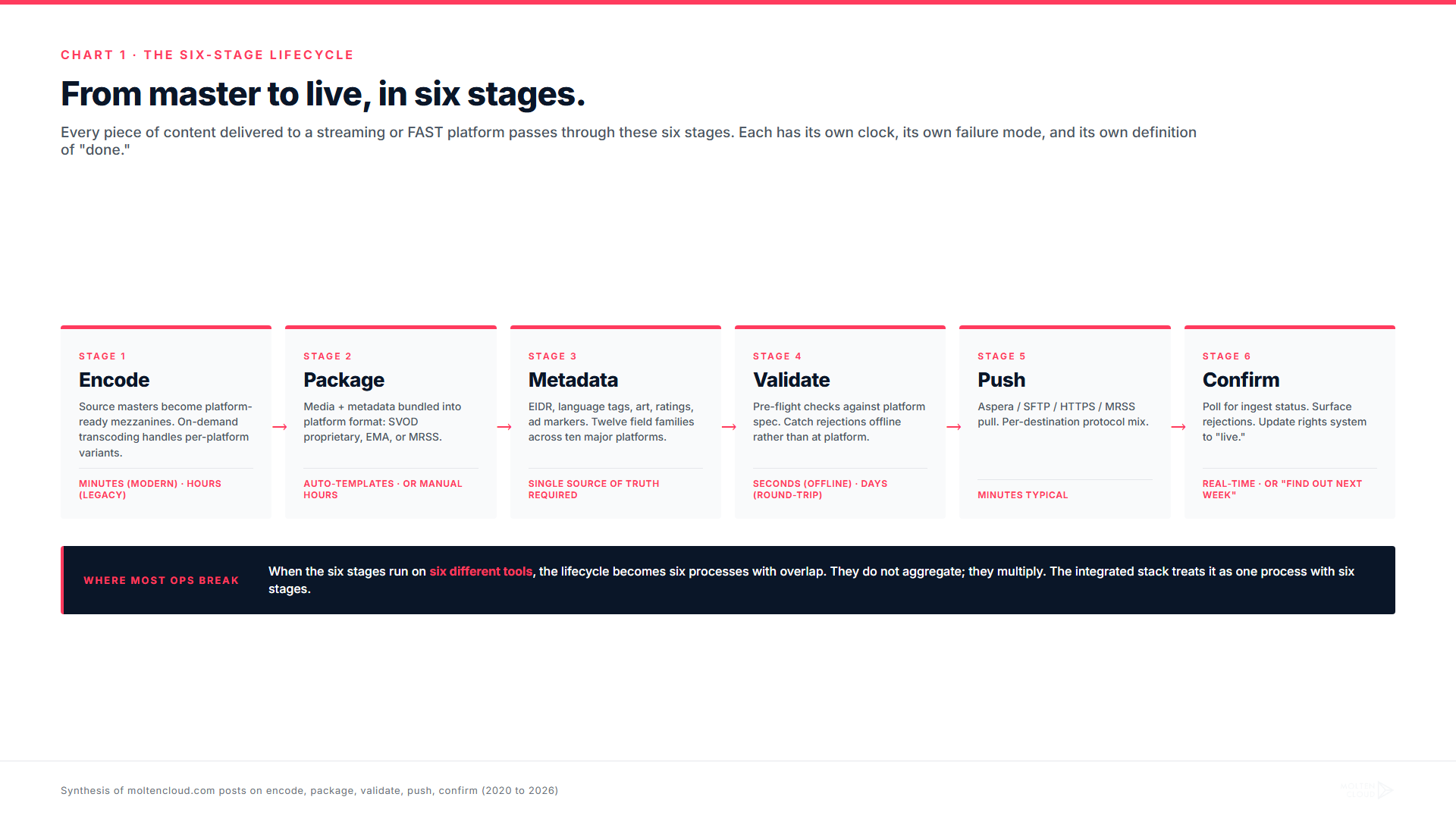

Every piece of content delivered to a streaming or FAST platform passes through six stages between "the master is ready" and "the title is live." Most delivery problems we see in 2026 trace to one of those six stages running in a separate tool, on a separate cadence, with a separate notion of state. This is the playbook for the full lifecycle and a synthesis of what Molten Cloud has written about each stage over the past four years.

If our last post was the platform map, this is the operational map. Same operation, viewed from the inside.

Stage 1: Encode

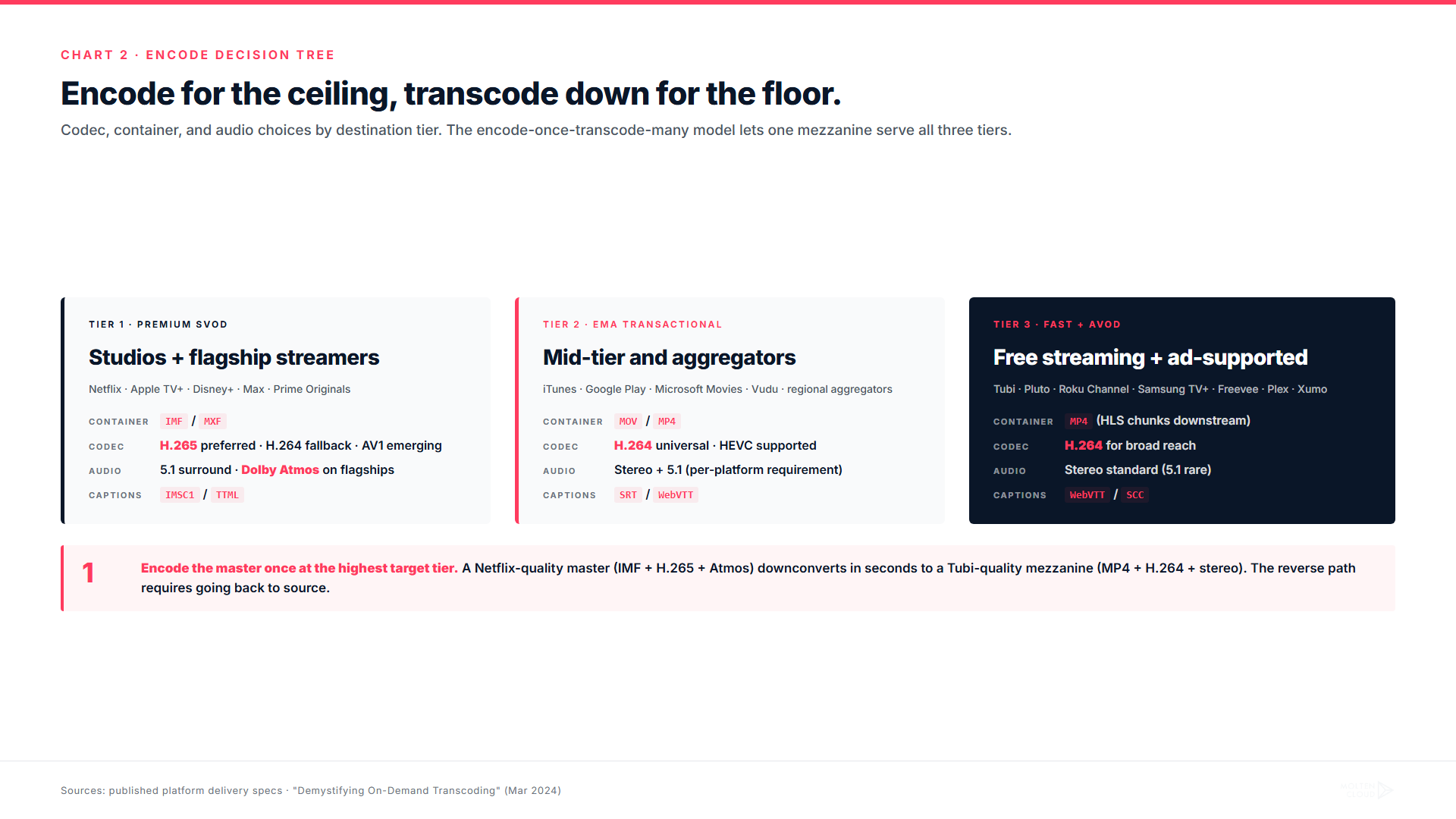

The encode stage starts the moment a master file is delivered (or a new title is acquired) and ends when a platform-ready mezzanine exists. In a 2018 workflow, this meant pre-baking every variant a distributor might eventually need: H.264 720p, H.264 1080p, H.265 1080p, H.265 4K, audio passes for stereo and 5.1, sometimes Atmos. Storage cost was high. Time-to-ready was hours per title. New variants required full re-encoding.

The 2024 model, which we walked through in Demystifying On-Demand Transcoding, is different. Source files (typically ProRes, often in a MOV container) are converted to a single MP4 mezzanine in the preparation phase. The platform-specific variants (different codecs, different bitrates, different container wrappers) are generated on demand a few seconds ahead of where a viewer is watching, with watermarks and DRM applied in real time. Cached fragments serve the second viewer onward. The preparation encode usually takes minutes, depending on title length. There is no per-viewer file, and storage cost stays flat.
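A minimal sketch of the on-demand idea in Python, assuming a hypothetical `transcode_fn` that turns one segment of the mezzanine into one rendition. It prepares the requested segment plus a small lookahead window and caches every fragment, so a second viewer of the same rendition triggers no new work. Watermarking, DRM, and real segment timing are deliberately left out.

```python
from typing import Callable, Dict, Tuple

# Cache key: (title_id, rendition, segment_index). Names are illustrative.
SegmentKey = Tuple[str, str, int]


class OnDemandTranscoder:
    """Sketch: derive segments from a single mezzanine only as viewers near them."""

    def __init__(self, transcode_fn: Callable[[str, str, int], bytes], lookahead: int = 3):
        self.transcode_fn = transcode_fn  # hypothetical: (title, rendition, index) -> bytes
        self.lookahead = lookahead        # segments prepared ahead of the playhead
        self.cache: Dict[SegmentKey, bytes] = {}
        self.transcode_calls = 0          # counts real transcodes, for illustration

    def _ensure(self, key: SegmentKey) -> bytes:
        # Transcode only on a cache miss; later viewers reuse the cached fragment.
        if key not in self.cache:
            self.transcode_calls += 1
            self.cache[key] = self.transcode_fn(*key)
        return self.cache[key]

    def play(self, title: str, rendition: str, playhead: int, total_segments: int) -> bytes:
        # Prepare the current segment plus a lookahead window, clamped to the title length.
        last = min(playhead + self.lookahead, total_segments - 1)
        for index in range(playhead, last + 1):
            self._ensure((title, rendition, index))
        return self.cache[(title, rendition, playhead)]


# Usage with a stand-in transcoder that just labels each segment.
transcoder = OnDemandTranscoder(lambda t, r, i: f"{t}:{r}:{i}".encode(), lookahead=3)
transcoder.play("T1", "h264_720p", 0, 10)   # first viewer: transcodes segments 0-3
transcoder.play("T1", "h264_720p", 0, 10)   # second viewer: served from cache
```

The lookahead window is the "few seconds ahead" in the text; its size trades startup latency against wasted work when a viewer abandons playback.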

The operational rule we have learned: encode for the most demanding destination on day one, transcode down for the rest on demand. A title that ships to Netflix as IMF + H.265 + Atmos can be transcoded in seconds to MP4 + H.264 + stereo for a FAST channel. A title that shipped initially to a FAST channel as MP4 + H.264 cannot be retroactively upgraded to a Netflix-quality master without going back to source. Optimize the master for the ceiling, not the floor.
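The "optimize for the ceiling" rule can be expressed as a dominance check. This sketch assumes three axes (resolution, HDR, audio layout) and treats codec and container as re-encodable in either direction, so they are left out. The rankings and example profiles are illustrative, not taken from any platform spec.

```python
from dataclasses import dataclass

# Illustrative ordering of audio layouts: a higher rank can be downmixed to a lower one.
AUDIO_RANK = {"stereo": 1, "5.1": 2, "atmos": 3}


@dataclass(frozen=True)
class Profile:
    height: int   # vertical resolution in pixels, e.g. 1080 or 2160
    hdr: bool
    audio: str    # key into AUDIO_RANK


def can_derive(master: Profile, target: Profile) -> bool:
    """True if target can be transcoded down from master without returning to source."""
    return (
        master.height >= target.height
        and (master.hdr or not target.hdr)   # SDR can come from HDR, not the reverse
        and AUDIO_RANK[master.audio] >= AUDIO_RANK[target.audio]
    )


premium_master = Profile(height=2160, hdr=True, audio="atmos")  # assumed premium SVOD master
fast_master = Profile(height=1080, hdr=False, audio="stereo")   # assumed FAST-only master
```

`can_derive(premium_master, fast_master)` holds while the reverse does not, which is the asymmetry the paragraph describes.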

Stage 2: Package

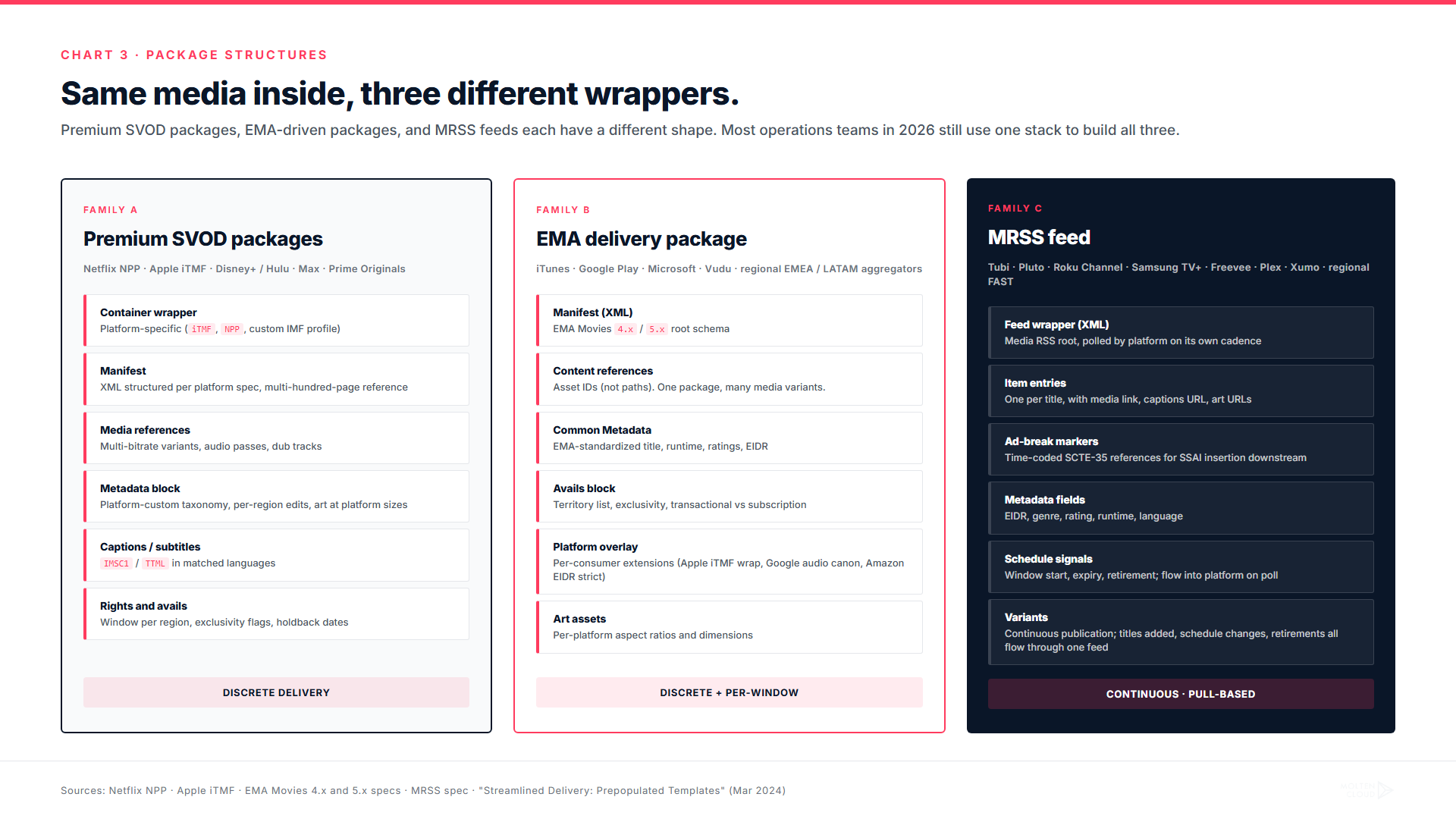

Packaging is where media meets metadata, and where most operations teams in 2026 still spend more time than they should. The package is the discrete unit that a platform ingests: it bundles the media references, the metadata, the rights and avails, the art, the captions, and any platform-specific extensions into a single structured artifact. Three formats matter:

- Premium SVOD packages (Netflix, Apple TV+, Disney+, Max) — proprietary, platform-specific structures. Each platform has a multi-hundred-page delivery specification that updates on its own cadence.

- EMA packages (iTunes, Google Play, Microsoft, in-region transactional aggregators) — the closest thing the industry has to a common standard. EMA Movies 4.x or 5.x, with platform-specific overlays.

- MRSS feeds (Tubi, Pluto, Roku Channel, Samsung TV+, Freevee) — XML feed structure for FAST and AVOD. Continuous publication rather than discrete delivery.
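For the MRSS tier, the package is a feed entry rather than a discrete bundle. A minimal sketch using Python's standard `xml.etree.ElementTree` and the Media RSS namespace (`http://search.yahoo.com/mrss/`). The input dictionary keys are hypothetical, and real FAST platforms layer their own required extensions (ad-break markers, ratings, artwork sizes) on top of these core elements.

```python
import xml.etree.ElementTree as ET

MRSS_NS = "http://search.yahoo.com/mrss/"
ET.register_namespace("media", MRSS_NS)  # serialize as xmlns:media="..."


def build_feed(title: str, link: str, description: str, items: list) -> str:
    """Build a minimal RSS 2.0 + Media RSS feed. Each item is a dict with
    hypothetical keys: title, guid, media_url, duration_s, thumbnail_url."""
    rss = ET.Element("rss", {"version": "2.0"})
    channel = ET.SubElement(rss, "channel")
    # title, link and description are the required RSS 2.0 channel elements.
    ET.SubElement(channel, "title").text = title
    ET.SubElement(channel, "link").text = link
    ET.SubElement(channel, "description").text = description
    for item in items:
        node = ET.SubElement(channel, "item")
        ET.SubElement(node, "title").text = item["title"]
        ET.SubElement(node, "guid", {"isPermaLink": "false"}).text = item["guid"]
        # media:content points the polling platform at the referenced media file.
        ET.SubElement(node, f"{{{MRSS_NS}}}content", {
            "url": item["media_url"],
            "type": "video/mp4",
            "duration": str(item["duration_s"]),
        })
        ET.SubElement(node, f"{{{MRSS_NS}}}thumbnail", {"url": item["thumbnail_url"]})
    return ET.tostring(rss, encoding="unicode")
```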

We made the case in Streamlined Delivery: Prepopulated Templates that "each platform has its unique specifications, often subject to change, making the preparation of content packages a labor-intensive task fraught with potential for error." The fix is structural: the package builder reads from the same metadata source the rights system reads from, so platform-specific fields are auto-filled rather than copy-pasted. The reason this matters is not aesthetic. It is the difference between a package that validates first try and one that bounces three times.
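A minimal sketch of that structural fix, assuming a hypothetical canonical record and two hypothetical platform templates that map each platform's field names onto canonical fields. No value is typed twice: every package field is read from the one record, and a gap in that record surfaces at build time rather than as a bounce.

```python
# Hypothetical canonical record: the single source the rights system also reads.
CANONICAL = {
    "title": "Example Feature",
    "runtime_min": 98,
    "eidr": "10.5240/1A2B-3C4D-5E6F-7A8B-9C0D-K",  # made-up, EIDR-shaped value
    "rating_us": "PG-13",
}

# Hypothetical templates: platform field name -> canonical field name.
TEMPLATES = {
    "platform_a": {"Title": "title", "RunTime": "runtime_min", "EIDR": "eidr", "Rating": "rating_us"},
    "platform_b": {"name": "title", "duration_minutes": "runtime_min"},
}


def fill_package(platform: str, record: dict) -> dict:
    """Auto-fill a platform's metadata fields from the canonical record."""
    template = TEMPLATES[platform]
    missing = [src for src in template.values() if src not in record]
    if missing:
        # Fail at build time instead of at the platform's validator.
        raise KeyError(f"{platform}: canonical record lacks {missing}")
    return {dst: record[src] for dst, src in template.items()}
```

When a platform revises its spec, only its template changes; the canonical record and every other template stay untouched.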

Stage 3: Metadata

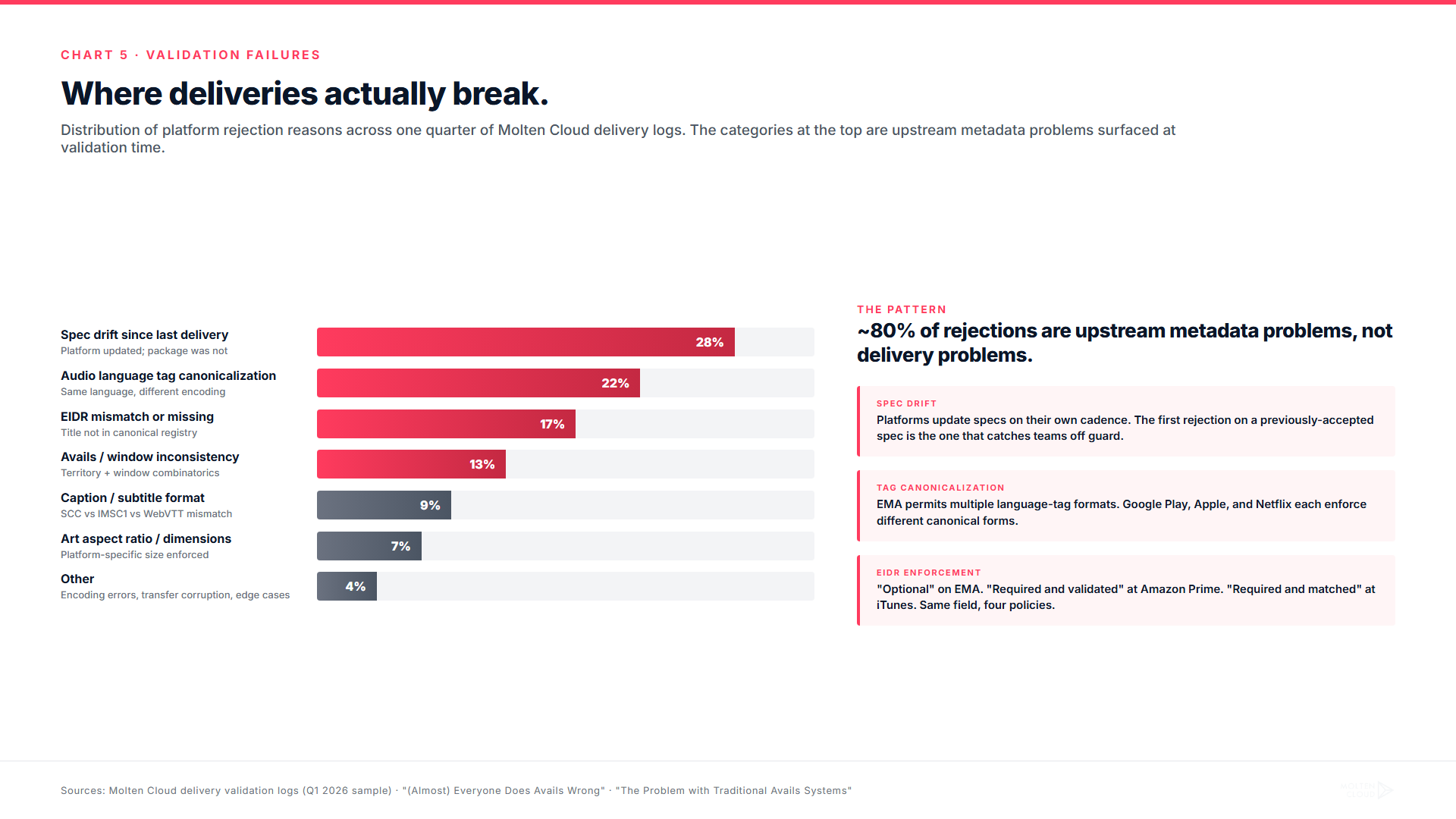

Metadata is the part of delivery operations that quietly causes the most rejections. Every platform demands a slightly different shape: EIDR identifiers (sometimes optional, sometimes required), language tags (often canonicalized differently across consuming validators), ratings boards (MPA in the US, BBFC in the UK, FSK in Germany, plus a long tail of regional ratings), genre taxonomies, art assets at platform-specific aspect ratios and sizes, ad-break markers for AVOD/FAST, captions and subtitles in platform-specific encodings (SRT, SCC, IMSC1, iTT, WebVTT), and synopsis variants in long form, short form, and one-liner.
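Language tags are a cheap place to remove variance. Assuming the tags are BCP 47 (RFC 5646), here is a sketch that normalizes only letter case to the RFC's recommended convention: lowercase language, title-case four-letter script, uppercase two-letter or three-digit region, lowercase everything after a singleton. It also assumes underscores should be read as hyphens. It does not validate subtags against the IANA registry or replace deprecated ones, and whether a given platform wants this exact form should be confirmed against that platform's spec.

```python
def canonical_case(tag: str) -> str:
    """Normalize the letter case of a BCP 47 language tag (case only)."""
    parts = tag.replace("_", "-").split("-")
    out = [parts[0].lower()]
    # A leading singleton ("x-...", "i-...") marks a private-use or grandfathered tag.
    after_singleton = len(parts[0]) == 1
    for sub in parts[1:]:
        if after_singleton or len(sub) == 1:
            # Extension and private-use subtags are conventionally lowercase.
            after_singleton = True
            out.append(sub.lower())
        elif len(sub) == 4 and sub.isalpha():
            out.append(sub.title())        # script, e.g. Hant
        elif (len(sub) == 2 and sub.isalpha()) or (len(sub) == 3 and sub.isdigit()):
            out.append(sub.upper())        # region, e.g. US or 419
        else:
            out.append(sub.lower())        # extlang, variant, etc.
    return "-".join(out)
```

For example, `canonical_case("zh-hant-tw")` yields `"zh-Hant-TW"` and `canonical_case("pt_br")` yields `"pt-BR"`.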

The chart below shows a small slice of the actual surface area. The full version, which is too dense for inline reading, covers ten platforms and twelve metadata field families.

The pattern in the data is the takeaway. Required fields are the easy part. Optional-but-strict fields, where the platform technically accepts the package without the field but rejects it on validation if the field is malformed, are where the operational time goes. EIDR is a clean example: "optional" on the EMA spec, "required and validated" at Amazon Prime, "required and matched against canonical title database" at iTunes, "absent and ignored" at most FAST platforms. The same field, four different policies.
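The four EIDR policies described above can be encoded as data rather than tribal knowledge. A sketch, assuming illustrative platform keys and a hypothetical `known_ids` set standing in for a canonical title database. The regular expression checks only the shape of an EIDR content ID (the `10.5240/` prefix, five groups of four uppercase hex digits, a final check character); it does not verify the check character itself.

```python
import re
from enum import Enum
from typing import FrozenSet, List, Optional


class EidrPolicy(Enum):
    IGNORED = "absent and ignored"
    OPTIONAL = "optional"
    REQUIRED_VALIDATED = "required and validated"
    REQUIRED_MATCHED = "required and matched against a title database"


# The four policies from the text; keys are illustrative labels, not platform IDs.
POLICIES = {
    "ema_spec": EidrPolicy.OPTIONAL,
    "amazon_prime": EidrPolicy.REQUIRED_VALIDATED,
    "itunes": EidrPolicy.REQUIRED_MATCHED,
    "fast_generic": EidrPolicy.IGNORED,
}

# Shape of an EIDR content ID; the final check character is not verified here.
EIDR_RE = re.compile(r"^10\.5240/(?:[0-9A-F]{4}-){5}[0-9A-Z]$")


def check_eidr(platform: str, eidr: Optional[str], known_ids: FrozenSet[str] = frozenset()) -> List[str]:
    policy = POLICIES[platform]
    if policy is EidrPolicy.IGNORED:
        return []
    if eidr is None:
        return [] if policy is EidrPolicy.OPTIONAL else ["EIDR missing"]
    # Optional-but-strict: a value that is present must be well formed.
    if not EIDR_RE.match(eidr):
        return ["EIDR malformed"]
    if policy is EidrPolicy.REQUIRED_MATCHED and eidr not in known_ids:
        return ["EIDR not in canonical title database"]
    return []
```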

Stage 4: Validate

Validation is the stage where the packaged delivery gets checked against the platform's spec before it crosses the wire. The checks fall into three buckets: structural validation (does the package conform to the schema), content validation (do the media files match what the manifest claims), and platform-specific validation (does the package satisfy whatever extensions the consuming platform layered on top of the spec). Most operations teams handle the first two reasonably. The third is where most rejections actually originate.
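The three buckets map naturally onto three passes over a package. A minimal sketch, assuming a hypothetical package shape (a manifest that claims per-file durations, plus probed durations for the files actually delivered), an assumed one-second duration tolerance, and platform rules supplied as plain functions. Real structural validation would run the platform's schema (XSD or similar) rather than a key check.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

REQUIRED_KEYS = ("title", "media")   # hypothetical minimal schema
DURATION_TOLERANCE_S = 1.0           # assumed tolerance for manifest vs. file


@dataclass
class Package:
    manifest: dict                   # what the package claims, e.g. {"media": {"a.mp4": 5400.0}}
    files: Dict[str, float]          # filename -> probed duration in seconds


@dataclass
class Report:
    structural: List[str] = field(default_factory=list)
    content: List[str] = field(default_factory=list)
    platform: List[str] = field(default_factory=list)

    @property
    def ok(self) -> bool:
        return not (self.structural or self.content or self.platform)


PlatformRule = Callable[[dict], Optional[str]]   # returns a problem string or None


def validate(pkg: Package, platform_rules: List[PlatformRule]) -> Report:
    report = Report()
    # 1. Structural: does the package conform to the (minimal) schema?
    report.structural = [f"missing '{k}'" for k in REQUIRED_KEYS if k not in pkg.manifest]
    if report.structural:
        return report                # later buckets assume a well-formed manifest
    # 2. Content: do the delivered files match what the manifest claims?
    for name, claimed in pkg.manifest["media"].items():
        actual = pkg.files.get(name)
        if actual is None:
            report.content.append(f"{name}: not delivered")
        elif abs(actual - claimed) > DURATION_TOLERANCE_S:
            report.content.append(f"{name}: duration {actual}s != manifest {claimed}s")
    # 3. Platform-specific: extensions the consuming platform layers on top.
    for rule in platform_rules:
        problem = rule(pkg.manifest)
        if problem:
            report.platform.append(problem)
    return report
```

Keeping the third bucket as a list of per-platform functions means adding a destination adds rules, not a new validator.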

We have written about the upstream side of this problem in two posts. (Almost) Everyone Does Avails Wrong argued that avails-side validation problems (territory exclusions, window overlaps, holdback violations) are rampant in the industry. The Problem with Traditional Avails Systems made the case that the way most distributors maintain avails (spreadsheets as the source of truth, maintained downstream of contracts) is structurally incompatible with serving fifty downstream destinations on different cadences. Both posts describe what the validation chart shows: rejections at validation time are downstream of an upstream data hygiene problem.
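On the avails side, a common collision is a requested window landing inside an exclusive or holdback window for the same territory. A minimal sketch with inclusive date ranges; it assumes territories are flat codes, whereas real avails data usually involves territory groups, media types, and formats that this leaves out. The `kind` labels are illustrative.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass(frozen=True)
class Window:
    territory: str       # flat code such as "US"; territory groups are out of scope
    start: date
    end: date            # inclusive
    kind: str = "avail"  # "avail", "exclusive", or "holdback" (illustrative labels)


def overlaps(a: Window, b: Window) -> bool:
    # Two inclusive ranges overlap when each starts on or before the other ends.
    return a.territory == b.territory and a.start <= b.end and b.start <= a.end


def conflicts(requested: Window, blocking: List[Window]) -> List[Window]:
    """Return the exclusive/holdback windows a requested avail collides with."""
    return [w for w in blocking if w.kind != "avail" and overlaps(requested, w)]
```

Running this against the rights data before packaging turns a platform-side rejection into a pre-push error.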

Stage 5: Push

Push is the stage of moving the validated package to the platform's ingest endpoint. This is the part that has the most public benchmarks attached to it, because "transfer speed" and "delivery throughput" are the metrics that get pitched in vendor decks. We have written about the speed side in Fast Track Your Content Delivery, the package-assembly side in How to Deliver Packages Before Your Coffee Cools, and the transfer-engine side in Content Transfer: Electrified.

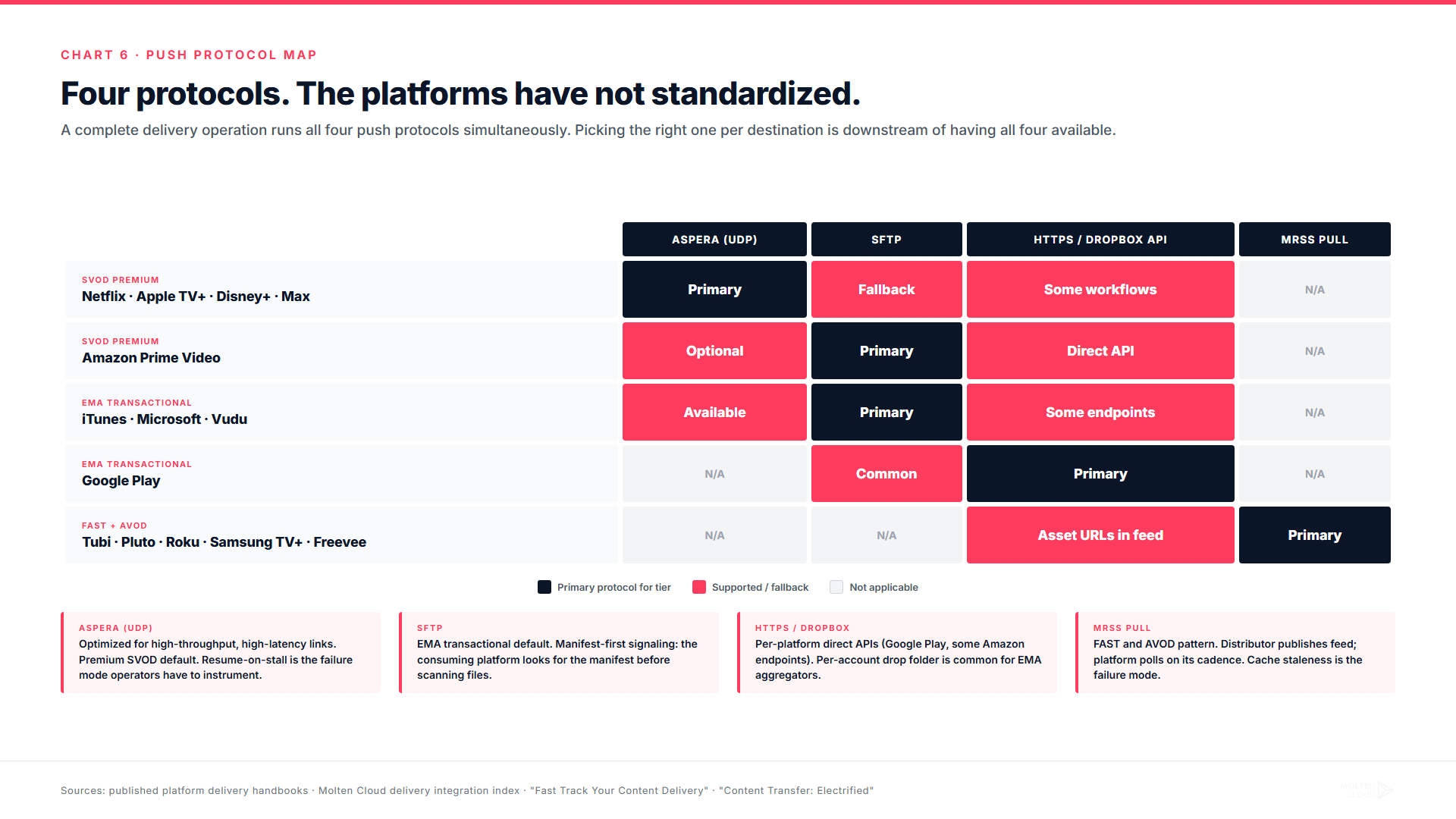

The transport protocol used at this stage varies by destination tier. Premium SVOD platforms typically accept Aspera (UDP-based, built for high-throughput transfer over high-latency links) or platform-specific dropbox APIs. EMA-driven platforms typically accept HTTPS or SFTP delivery to a per-account drop endpoint, sometimes with manifest-first signaling. FAST and AVOD platforms run on the MRSS pull model: the distributor publishes the feed, the platform polls it on its own cadence and pulls referenced media on demand.

The operational subtlety the protocol map hides: each of these protocols has its own way of failing. Aspera transfers can stall mid-stream and require resume. SFTP transfers can complete and still be invisible to the consuming platform if the manifest signal was missed. MRSS feeds can publish correctly and still get ignored if the polling platform's cache is stale. The push stage is not "did the bytes move," it is "did the bytes move AND did the platform notice."
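That two-part definition of done can be made explicit. A sketch, assuming hypothetical callables: `transfer(offset)` sends bytes from `offset` and returns the new offset (it may stop early, as a stalled session would), and `platform_ack()` reports whether the platform has registered the delivery (a manifest receipt, an ingest status, a feed fetch). Only both conditions together count as delivered.

```python
import time
from typing import Callable


def push(
    transfer: Callable[[int], int],
    total_bytes: int,
    platform_ack: Callable[[], bool],
    max_attempts: int = 5,
    ack_polls: int = 3,
    poll_interval_s: float = 0.0,
) -> str:
    offset = 0
    for _ in range(max_attempts):
        offset = transfer(offset)          # resume from where the last attempt stopped
        if offset >= total_bytes:
            break
    else:
        return "stalled"                   # bytes never finished moving
    for _ in range(ack_polls):
        if platform_ack():
            return "delivered"             # bytes moved AND the platform noticed
        time.sleep(poll_interval_s)
    return "moved_not_acknowledged"        # e.g. a missed manifest signal
```

Returning three distinct outcomes, rather than a boolean, keeps "the bytes moved but nobody noticed" from being logged as success.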

Stage 6: Confirm

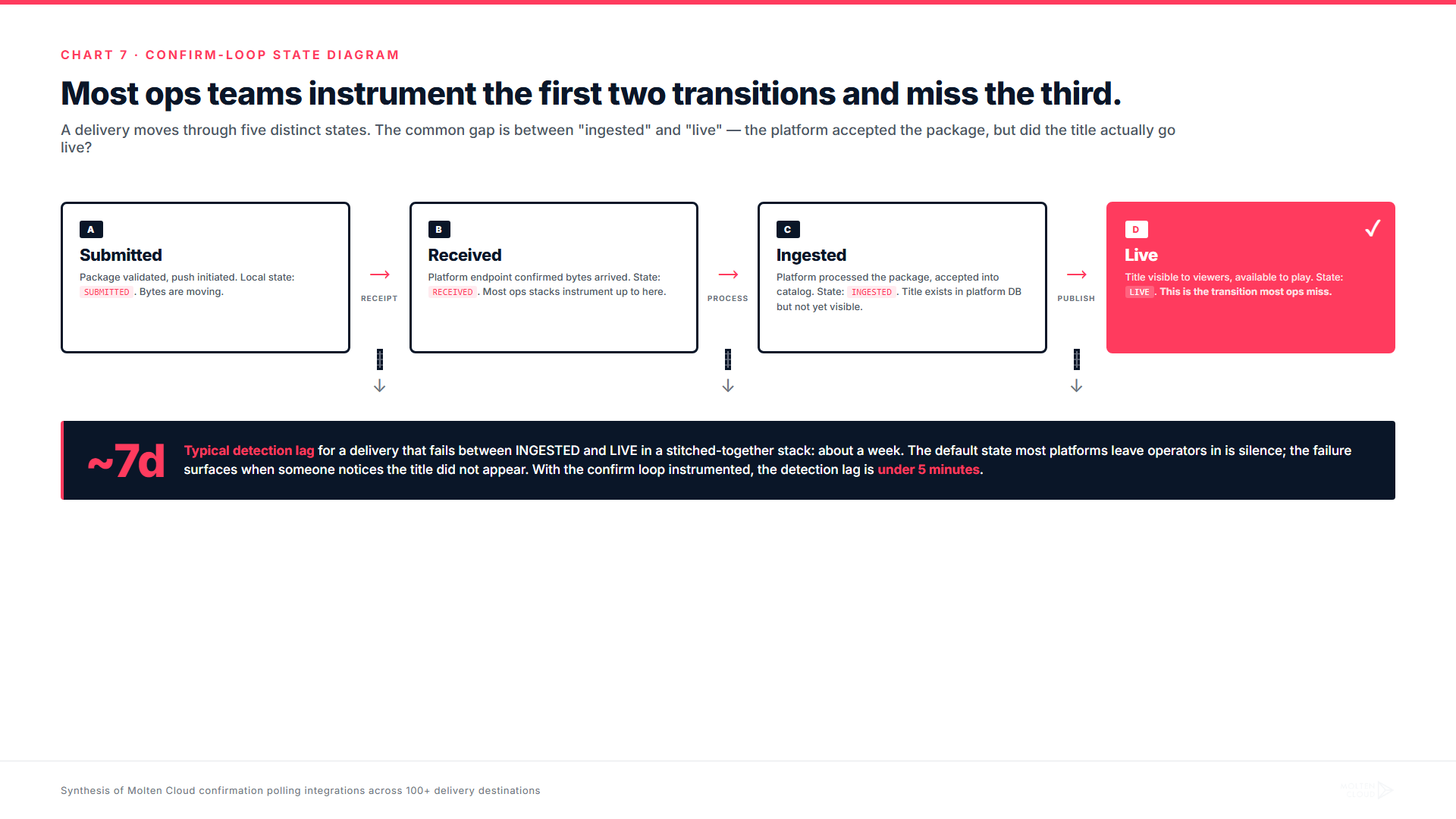

Confirm is the most under-engineered stage in most delivery operations and the one we end up retrofitting into customer workflows most often. Confirm is the answer to the question: "OK, the package was pushed, but is the title actually live?"

The default state most platforms leave delivery operators in is silence. A package gets accepted at the ingest endpoint, then disappears into the platform's processing pipeline. Days later, sometimes a week later, the title shows up in the platform's catalog (or it does not). Premium SVOD platforms surface failures via portal status pages that the operator has to actively check. EMA-driven platforms surface failures via per-account email (which by default lands in a shared inbox, where it often gets lost). FAST platforms surface failures by the title silently not appearing in the schedule.

Building the confirm loop yourself is a non-trivial integration project. Each platform exposes status differently: REST polling at one, signed webhooks at another, emailed PDFs at a third, scraped portal pages at a fourth. The pattern that works is the one where the rights system, the delivery system, and the confirmation poller all read from and write to the same record. When a confirmation event arrives, the title's state in the rights system updates automatically. When the rights system needs to know whether a title is live in a region, the answer is one query, not three.
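A sketch of the shared-record pattern, assuming hypothetical adapters for two of the status styles mentioned above (a webhook payload and a polled REST response); the payload shapes are invented for illustration. Each adapter normalizes to one status vocabulary and writes into the same per-title record, so the regional liveness question becomes a lookup on that record.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Tuple


class Status(Enum):
    SUBMITTED = "submitted"
    INGESTED = "ingested"
    LIVE = "live"
    FAILED = "failed"


@dataclass
class TitleRecord:
    """One record per title, read and written by rights, delivery and confirm alike."""
    title_id: str
    status: Dict[Tuple[str, str], Status] = field(default_factory=dict)  # (platform, territory)

    def apply(self, platform: str, territory: str, status: Status) -> None:
        self.status[(platform, territory)] = status

    def is_live(self, platform: str, territory: str) -> bool:
        return self.status.get((platform, territory)) is Status.LIVE

    def live_platforms(self, territory: str) -> List[str]:
        # "Is this title live in this region?" answered from one record.
        return sorted(p for (p, t), s in self.status.items() if t == territory and s is Status.LIVE)


# Hypothetical adapters: each platform reports status in its own shape,
# and each adapter normalizes it to the shared Status enum.
def from_webhook(payload: dict) -> Status:
    return {"PUBLISHED": Status.LIVE, "PROCESSING": Status.INGESTED, "ERROR": Status.FAILED}[payload["state"]]


def from_polling(response: dict) -> Status:
    if response.get("error"):
        return Status.FAILED
    return Status.LIVE if response.get("live") else Status.INGESTED
```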

What the lifecycle costs and what it returns

The four operators we featured in our last post all did the same thing: they collapsed the six stages onto one data model. The result, viewed stage by stage, looks like this:

The pattern is consistent. The first wave of gains comes from encode and push, the two stages that pre-existing tools already optimized for. On-demand transcoding cuts encoding time by an order of magnitude. Integrated transfer cuts push time roughly in half. These are the gains that show up in vendor pitches.

The second wave, the one that drives the actual revenue impact, comes from validate and confirm. Cutting the rejection rate from "three bounces typical" to "first-try acceptance typical" does not just save the operations team time. It cuts the lag between deal close and title live, which is the lag that decides whether a release window opens on schedule. Cutting confirm-loop latency from "find out a week later" to "find out within minutes" is the difference between a platform partnership the operator can scale and one they cannot.

The reason an integrated stack delivers both waves of gains and a stitched-together stack delivers only the first is the data model. When the same record represents the title in encode, package, metadata, validate, push, and confirm, the lifecycle is one process with six stages. When each of those stages reads from a different tool, the lifecycle is six processes with overlap. Six processes do not aggregate. They multiply.
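One illustrative way to read "they multiply": if each stage hands off cleanly with some probability and failures are independent, the end-to-end first-pass rate is the product of the per-stage rates. The rates below are assumed for the arithmetic, not measured from the case studies.

```python
from math import prod


def end_to_end_rate(stage_rates):
    """First-pass success across all stages, assuming independent failures."""
    return prod(stage_rates)


# Six stages at an assumed 90% clean-handoff rate each.
print(round(end_to_end_rate([0.9] * 6), 3))   # 0.531
# The same six stages at an assumed 99% each.
print(round(end_to_end_rate([0.99] * 6), 3))  # 0.941
```

Under these assumptions, six stages that each look healthy at 90% clear end to end only about half the time, and a single weak stage drags down the whole product.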

Where to start if you are inheriting a stitched-together stack

The pragmatic version of the playbook for operators who are not starting from a clean slate (which is most of them in 2026) is sequenced. We have seen this work across the case studies covered in our customer post series:

- Instrument confirm first. Before optimizing any other stage, get a single dashboard answering "what was submitted, what is ingested, what is live, what failed." If the operations team cannot answer that question in one query, none of the other gains compound.

- Unify metadata next. Pull the canonical metadata (title, EIDR, runtime, art, ratings, language list) into a single source of truth. Validate-stage rejections drop the moment downstream packaging stops re-typing fields.

- Move validate upstream. Run platform-specific validators against the package before push, not after. Most rejections at the platform are issues that an offline validator can catch in seconds.

- Replace pre-baked encoding with on-demand. Once the rest of the pipeline is integrated, the encode stage becomes the obvious bottleneck to remove.

- Tighten the push protocol mix last. Aspera, SFTP, HTTPS, MRSS — all four belong in the operation. Picking the right one per destination is a downstream optimization, not the first thing to fix.
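The "one query" test for instrumenting confirm can be sketched with Python's built-in `sqlite3`, assuming a hypothetical `deliveries` table that holds one row per title per platform with a single status column. Because submitted, ingested, live, and failed all live in the same column of the same table, the dashboard is one `GROUP BY`.

```python
import sqlite3

STATUSES = ("submitted", "ingested", "live", "failed")


def build_demo_db() -> sqlite3.Connection:
    # Hypothetical schema: one row per (title, platform) with its current status.
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE deliveries (title_id TEXT, platform TEXT, status TEXT "
        "CHECK (status IN ('submitted', 'ingested', 'live', 'failed')))"
    )
    conn.executemany(
        "INSERT INTO deliveries VALUES (?, ?, ?)",
        [
            ("T1", "svod_a", "live"),
            ("T1", "fast_b", "ingested"),
            ("T2", "svod_a", "failed"),
            ("T2", "fast_b", "submitted"),
            ("T3", "fast_b", "live"),
        ],
    )
    return conn


def dashboard(conn: sqlite3.Connection) -> dict:
    """What was submitted, ingested, live, failed: one query."""
    rows = conn.execute("SELECT status, COUNT(*) FROM deliveries GROUP BY status").fetchall()
    counts = dict(rows)
    return {status: counts.get(status, 0) for status in STATUSES}
```

If answering the same question requires joining exports from separate encode, transfer, and portal tools, that is the signal the confirm stage is not yet instrumented.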

The wrong order is the one most operations teams default to: optimize encode first because it shows up in benchmarks, then never get around to confirm because confirm does not have a vendor pitch attached to it. The order that actually matters reverses that.

Or, if you are starting from scratch and willing to pick a stack: we built ours in 2020 around the bet that the operational moat in distribution is integration, not infrastructure. The 2026 reality has been kinder to that bet than we predicted.

Internal: each stage is anchored in a prior post

- Demystifying On-Demand Transcoding (March 2024) — encode

- Streamlined Delivery: Prepopulated Templates (March 2024) — package

- MRSS and HLS Explained: A Technical Guide to FAST (February 2024) — package and push for FAST

- (Almost) Everyone Does Avails Wrong (February 2024) — validate

- The Problem with Traditional Avails Systems (November 2024) — validate

- Fast Track Your Content Delivery (April 2024) — push and confirm

- How to Deliver Packages Before Your Coffee Cools (March 2024) — push

- Content Transfer: Electrified (March 2024) — push

- Stop Pitching the Same Titles to Amazon Prime (February 2024) — validate / cross-platform

- Introducing Content Delivery as a Service (June 2020) — full lifecycle origin

- Delivering to Netflix, Apple TV+, Prime Video, and 100+ FAST channels (May 2026) — companion piece

- When We Wrote About Streaming Last January, We Made a Bet (May 2026) — financial backdrop

External technical references

- EMA Movies and Manifest specifications: entmerch.org/digitalema

- HLS specification (RFC 8216): datatracker.ietf.org/doc/html/rfc8216

- MRSS specification: rssboard.org/media-rss

- EIDR registry and identifier specification: eidr.org

Running the six stages on six different tools?

Molten Cloud collapses encode, package, metadata, validate, push, and confirm onto a single record per title. Talk to our team.