Between 2018 and 2024, content delivery quietly broke. Not in the sense that anything stopped working: deliveries kept going out, titles kept going live, distributors kept getting paid. Broke in the sense that the operational economics that made distribution profitable in 2014 stopped applying, and the industry took five years to notice.

This is the post we have been working up to for four years. The 2026 streaming revenue data, which we wrote about in our streaming revenue thesis, is the financial half of the story. The platform-by-platform delivery learnings in our last post are the operational half. This piece is the connective tissue: what actually broke, why most of the industry misdiagnosed it, and what fixed it.

When delivery worked (2010 to 2018)

For most of the streaming era's first decade, content delivery had a clean operational shape. A typical distributor served five to fifteen meaningful destinations: Netflix, Apple iTunes, Amazon Prime, Google Play, Microsoft, Vudu, a handful of regional aggregators, plus theatrical, broadcast, and cable. Each relationship was high-touch. Deals took months to close. Deliveries were bespoke per counterparty. Operations teams scaled linearly with the deal pipeline.

The tools that emerged to support that shape (encoding farms, transfer engines, QC suites, EMA validators, rights spreadsheets) were point tools by design. Each one solved a specific problem at a specific stage of the workflow. They communicated through file handoffs and email. The workflow was sequential, and the team coordinated by talking. It worked, because the workflow was sequential and the team could fit in one room.

We made a version of this argument back in 2022 in The Insatiable Demand for Content. The part that aged well was the prediction that platforms would lock themselves into a content arms race they could not afford to slow down. What we underestimated was how quickly the operational shape required to serve those platforms would change.

What broke (2018 to 2024)

Three things happened in roughly the same window, and each one alone would have stressed the legacy operational model. Together, they broke it.

First, platform count exploded. The five-to-fifteen-destination distributor of 2018 became the hundred-plus-destination distributor of 2026. We covered the platform side of this in our last post. Disney+ launched. Apple TV+ launched. Peacock launched. HBO Max launched. Then the FAST and AVOD layer materialized: Tubi, Pluto, The Roku Channel, Samsung TV Plus, Freevee, Plex, Xumo, Crackle, Sling Freestream, plus regional FAST channels and OEM-specific marketplaces.

Second, specs started drifting independently. When five platforms each had a delivery spec, a delivery operations team could read all five and keep track of changes. When a hundred platforms each have a delivery spec, no team can. Apple's iTunes spec has gone through dozens of revisions. Netflix's NPP delivery handbook is a multi-hundred-page document that updates on its own cadence. Amazon Prime Video maintains separate spec families for direct-publishing, channels, and licensing. The same metadata field has different policies at different platforms: required at one, strictly validated at another, ignored at a third. We laid out a slice of this surface in our delivery playbook post.
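
To make the per-field drift concrete, here is a minimal sketch. The platform names, the `rating` field, and the three policy levels are hypothetical and stand in for whatever a real spec defines; nothing here reflects an actual platform's rules.

```python
from enum import Enum


class Policy(Enum):
    REQUIRED = "required"  # field must be present
    STRICT = "strict"      # field must be present and be an allowed value
    IGNORED = "ignored"    # platform does not look at the field


# Hypothetical policies for one field ("rating") at three made-up platforms.
RATING_POLICY = {
    "platform_a": (Policy.REQUIRED, None),
    "platform_b": (Policy.STRICT, {"G", "PG", "PG-13", "R"}),
    "platform_c": (Policy.IGNORED, None),
}


def check_rating(platform: str, metadata: dict) -> list[str]:
    """Return the problems one platform would raise for the 'rating' field."""
    policy, allowed = RATING_POLICY[platform]
    value = metadata.get("rating")
    if policy is Policy.IGNORED:
        return []
    if not value:
        return [f"{platform}: rating is missing"]
    if policy is Policy.STRICT and value not in allowed:
        return [f"{platform}: rating {value!r} is not an allowed value"]
    return []


# The same title record passes at two platforms and bounces at the third.
title = {"name": "Example Title", "rating": "TV-MA"}
problems = [p for name in RATING_POLICY for p in check_rating(name, title)]
```

Multiply one field by every field in a package and by a hundred platforms, and the table above stops being something a team can keep in its head.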

Third, AVOD and FAST changed the operational shape entirely. SVOD-era operations were built around discrete deliveries: build a package, push it, wait for confirmation, mark the title live. FAST-era operations are built around continuous feeds: maintain an MRSS feed, the platform polls it, schedule changes flow through. We covered the technical foundation in MRSS and HLS Explained back in 2024. What we did not say then, because the data was not yet conclusive, was that the FAST shape was about to become the dominant shape for the long tail of revenue.
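
As a rough illustration of the feed shape, the sketch below rebuilds a small MRSS document from the current catalog each time it is called, which is the opposite of a one-shot package push. The `build_feed` helper, the item fields, and the example.com URLs are assumptions for illustration; real platforms each specify their own required elements.

```python
import xml.etree.ElementTree as ET

# Media RSS namespace; registering it makes the output use the "media:" prefix.
MRSS_NS = "http://search.yahoo.com/mrss/"
ET.register_namespace("media", MRSS_NS)


def build_feed(channel_title: str, items: list[dict]) -> str:
    """Serialize the current catalog as a minimal RSS 2.0 + Media RSS feed."""
    rss = ET.Element("rss", {"version": "2.0"})
    channel = ET.SubElement(rss, "channel")
    ET.SubElement(channel, "title").text = channel_title
    for item in items:
        node = ET.SubElement(channel, "item")
        ET.SubElement(node, "title").text = item["title"]
        ET.SubElement(node, "guid").text = item["guid"]
        # media:content points the platform at the HLS manifest.
        ET.SubElement(
            node,
            f"{{{MRSS_NS}}}content",
            {"url": item["url"], "type": "application/x-mpegURL"},
        )
    return ET.tostring(rss, encoding="unicode")


# Hypothetical catalog entry; the platform would poll the resulting document.
feed = build_feed(
    "Example Channel",
    [{"title": "Example Title", "guid": "t-001",
      "url": "https://example.com/t-001/master.m3u8"}],
)
```

There is no "delivery complete" moment in this model. Whatever the feed says at the next poll is the delivery.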

The symptoms

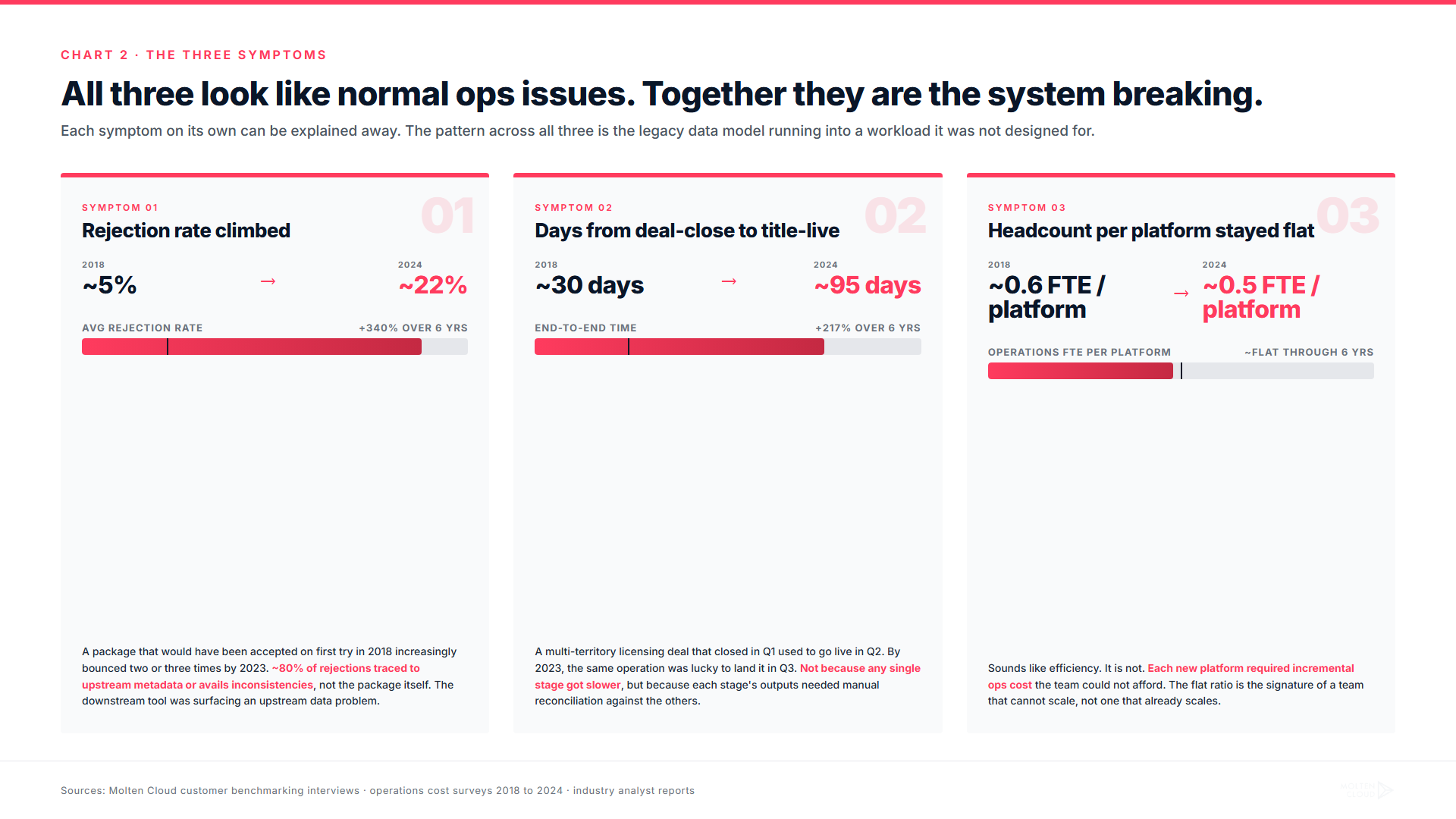

The break did not announce itself. It accumulated through a set of symptoms that each looked, on its own, like a normal operational issue. Together, they were the system breaking.

Symptom one: rejection rates climbed. A package that would have been accepted on first try in 2018 increasingly bounced two or three times by 2023. We surfaced the upstream cause in (Almost) Everyone Does Avails Wrong and The Problem with Traditional Avails Systems: the platform-side rejections were almost always downstream of metadata or avails inconsistencies that originated upstream, in tools that were not talking to each other.

Symptom two: time from deal-close to title-live crept up. A multi-territory licensing deal that closed in Q1 used to go live in Q2. By 2023, the same operation was lucky to land it in Q3. Not because any single stage got slower, but because each stage's outputs needed manual reconciliation against the other stages' outputs.

Symptom three: operations headcount per platform stayed flat. This sounds like a good thing. It is not. It is the sign of a team that cannot scale. If headcount per platform stays flat, total headcount grows in lockstep with platform count: each new platform carries its own incremental operations cost. That math broke the moment platform count crossed thirty.
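
The arithmetic is simple enough to write down. In this sketch the 0.25 operators-per-platform figure is an illustrative assumption, not a measured number; the point is only that a constant per-platform cost makes total headcount linear in platform count.

```python
HEADS_PER_PLATFORM = 0.25  # assumed constant, for illustration only


def ops_headcount(platforms: int, heads_per_platform: float) -> float:
    """Total operations headcount when per-platform cost never falls."""
    return platforms * heads_per_platform


for platforms in (10, 30, 100):
    print(platforms, ops_headcount(platforms, HEADS_PER_PLATFORM))
# prints:
# 10 2.5
# 30 7.5
# 100 25.0
```

Ten times the platforms means ten times the team. A team that scales is one where the per-platform figure falls as platforms are added.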

The misdiagnosis

Most of the industry, faced with these symptoms, reached for a familiar diagnosis: the tooling is too slow. Buy a faster encoder. Buy a smarter transfer engine. Buy a more accurate QC tool. Add a metadata management layer. Add a delivery operations dashboard.

This was the wrong diagnosis. It treats the symptoms, not the cause. We have watched operators add five tools over four years and end up with the same symptoms they started with, sometimes worse, because each new tool added a fresh handoff between systems and a fresh place for state to drift out of sync.

The misdiagnosis is intuitive because it is the diagnosis the vendor pitch makes easy. Every point-tool vendor's pitch is "we make stage X faster." Faster stages should add up to a faster pipeline. But operations are not addition. They are coordination. Adding more tools to a pipeline that already has too many handoffs makes the pipeline slower, not faster.

The actual diagnosis

The actual diagnosis is data-model fragmentation. A title's identity in the legacy stack is fragmented across systems that do not agree on what the title is. The encoder has a file path. The metadata tool has a record with a slightly different title and an EIDR that may or may not match. The rights system has a contract row with a window that does not quite line up with what the metadata says is live. The delivery tool has a job that references the file path. The confirmation poller has an email rule that watches for a vendor reply that may or may not arrive.

When the same title is six rows in six tools, "what is the canonical state of this title right now" is not a question with a one-query answer. It is a reconciliation project. And reconciliation projects do not scale to a hundred platforms.
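
A small sketch of what that reconciliation looks like in practice. Each dict stands in for one tool's view of the same title; every key, path, and value is hypothetical, and `find_mismatches` is an illustrative helper rather than anything from a real product.

```python
# Four tools, four partial and slightly different views of one title.
views = {
    "encoder": {"file_path": "/masters/example_title_v2.mov"},
    "metadata_tool": {"title": "Example Title, The", "eidr": "EIDR-PLACEHOLDER"},
    "rights_system": {"title": "The Example Title", "window_start": "2024-03-01"},
    "delivery_tool": {"file_path": "/masters/example_title_v1.mov", "status": "pushed"},
}


def find_mismatches(views: dict[str, dict]) -> list[str]:
    """Flag every field whose value differs from the first tool that reported it."""
    seen: dict[str, tuple[str, object]] = {}
    mismatches = []
    for tool, record in views.items():
        for field_name, value in record.items():
            if field_name in seen and seen[field_name][1] != value:
                first_tool = seen[field_name][0]
                mismatches.append(f"{field_name}: {first_tool} != {tool}")
            seen.setdefault(field_name, (tool, value))
    return mismatches


mismatches = find_mismatches(views)
# ['title: metadata_tool != rights_system', 'file_path: encoder != delivery_tool']
```

Note what the helper cannot do: it can say the tools disagree, but not which one is right. That judgment is the manual work.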

The fix

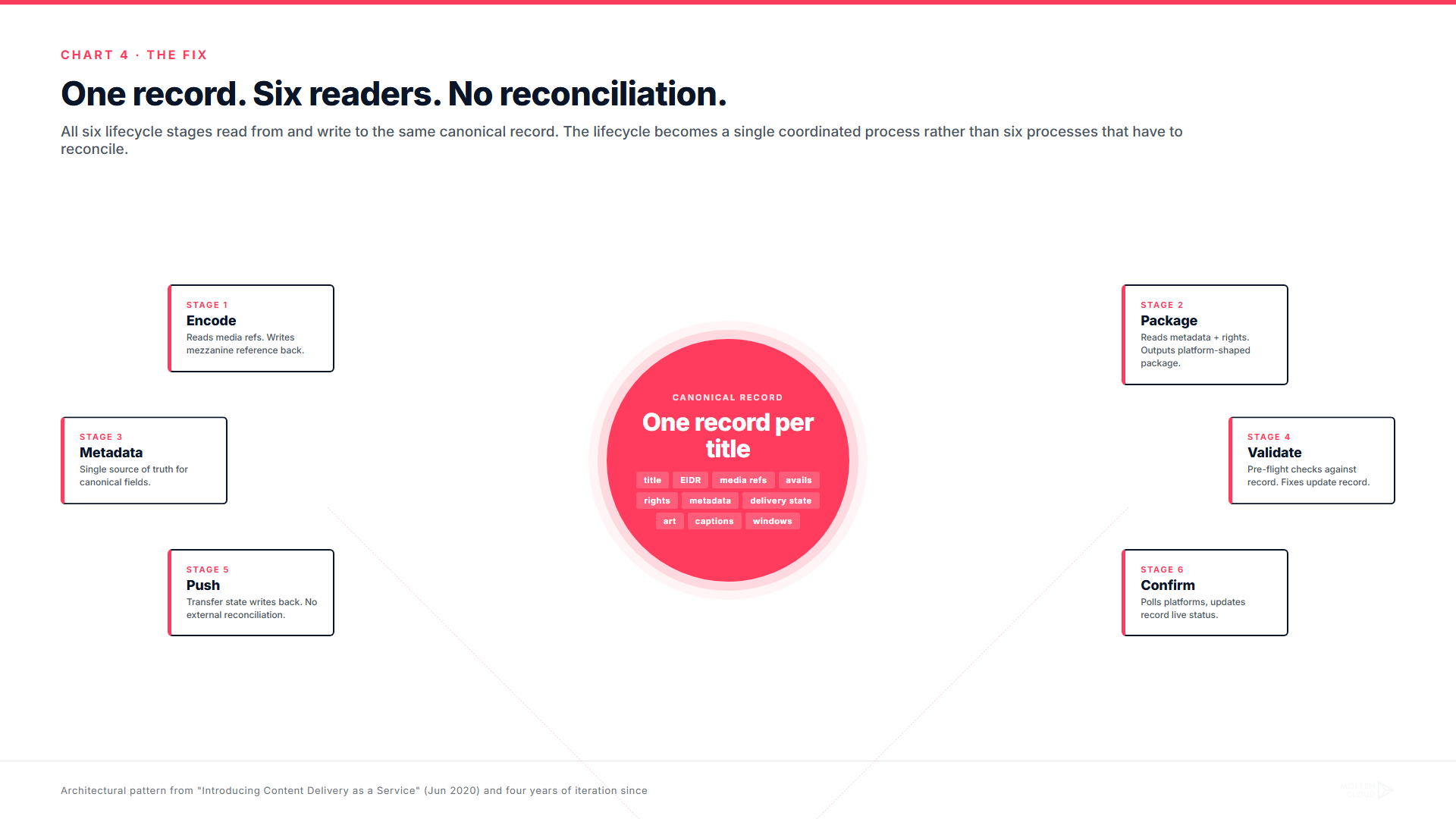

The fix is not a tool. The fix is a data model. Specifically: collapse the lifecycle onto one record per title. The same database row is the source of truth that the encoder reads, the packager reads, the metadata system reads, the validator reads, the transfer engine reads, and the confirmation poller reads. There is no inter-system reconciliation. There is one canonical state, updated as events flow through.
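
As a sketch of the idea, not of our actual schema: the in-memory dataclass below stands in for the database row, and the `Stage` names, `TitleRecord` fields, and `apply` method are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Stage(Enum):
    ENCODED = "encoded"
    PACKAGED = "packaged"
    VALIDATED = "validated"
    TRANSFERRED = "transferred"
    CONFIRMED = "confirmed"


@dataclass
class TitleRecord:
    """One record per title; every stage reads and updates this object."""
    title_id: str
    name: str
    file_path: str
    # platform -> latest stage reached at that platform
    delivery_state: dict[str, Stage] = field(default_factory=dict)
    # append-only log of (platform, stage) events
    history: list[tuple[str, Stage]] = field(default_factory=list)

    def apply(self, platform: str, stage: Stage) -> None:
        """Record an event and update the canonical state in place."""
        self.delivery_state[platform] = stage
        self.history.append((platform, stage))

    def state(self, platform: str) -> Optional[Stage]:
        """Answer 'where is this title at this platform' with one lookup."""
        return self.delivery_state.get(platform)


record = TitleRecord("t-001", "Example Title", "/masters/example_title.mov")
record.apply("platform_a", Stage.ENCODED)
record.apply("platform_a", Stage.VALIDATED)
record.apply("platform_b", Stage.TRANSFERRED)
```

The design choice that matters is that no stage keeps its own copy of the title name or file path. There is nothing to reconcile because there is only one place the answer can live.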

This is the bet we made when we launched Content Delivery as a Service in 2020. It looked, at the time, like a niche architectural choice. Most of the operators we pitched in 2020 were not actively suffering yet. Their platform count was twenty, not a hundred. Their rejection rates were tolerable. Their tools-per-stage were inherited and paid for. The argument we were making did not yet have the operational urgency the data has given it now.

Four years later, the operators who took the integrated bet early are the ones whose throughput numbers we have been publishing in case studies. The ones who took the point-tool bet are the ones replacing those tools now under operational pressure.

What the fix produced

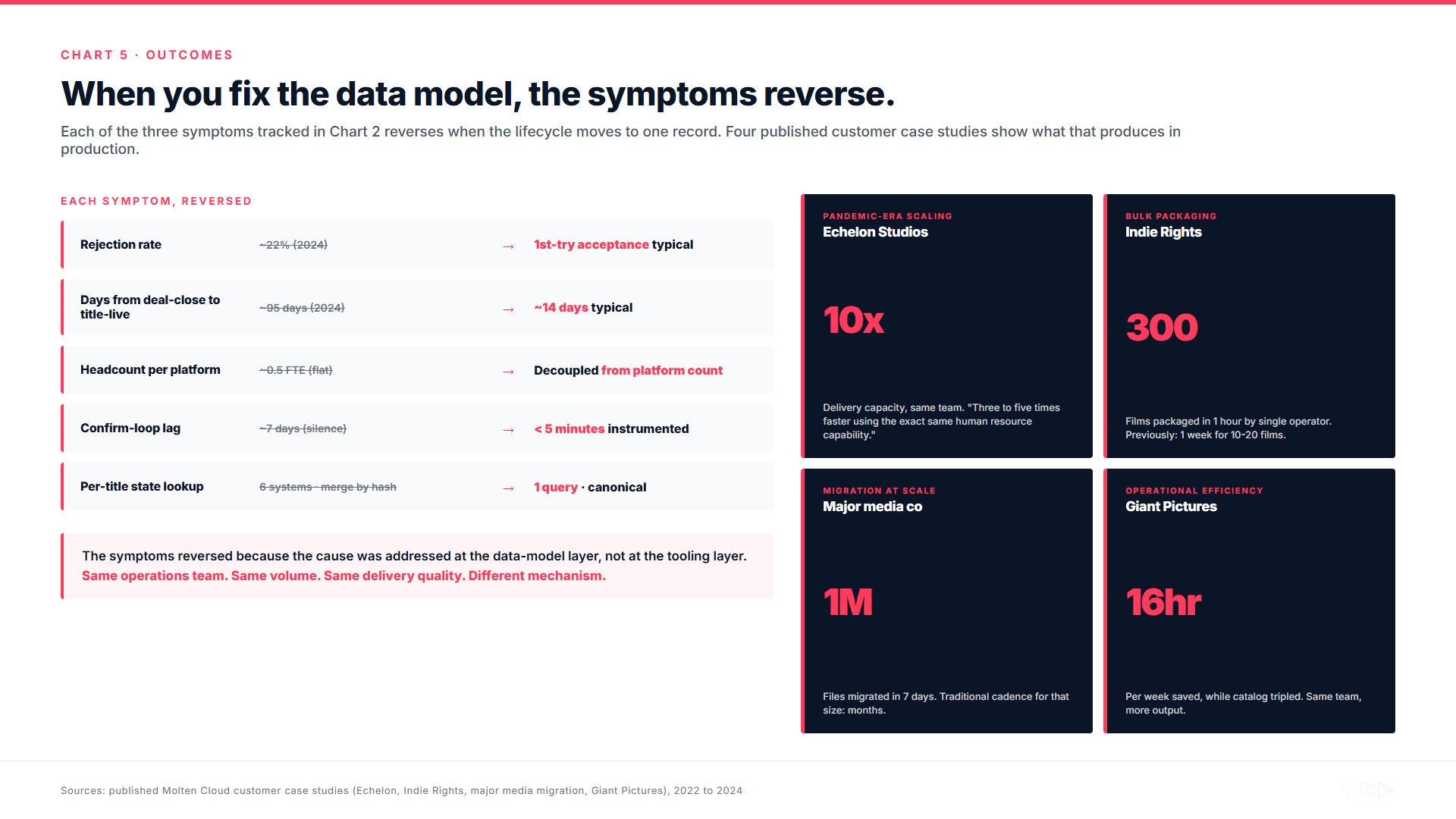

The customer outcomes track what you would expect when six fragmented data models become one. Speed gains in the stages that pre-existing tools were already optimizing (encode, push). Reliability gains in the stages those tools were not optimizing (validate, confirm). The relative size of each kind of gain matches what you see when an operations team stops spending time on reconciliation and starts spending it on delivery.

The numbers we have written about in past posts: Echelon Studios' 10x delivery capacity with the same team. Three to five times faster delivery at the same headcount. Giant Pictures saving 16 hours per week while tripling the catalog. A million files migrated in seven days in an operation that traditionally would have run for months. None of these came from a faster encoder or a smarter transfer tool. All of them came from the encoder, the metadata system, the rights system, and the delivery system reading from the same record.

What this thesis has cost us to learn

This argument is not new. It has been building in this blog since 2020. We have written about single source of truth for what was pitched where. About prepopulated delivery templates as the alternative to per-platform copy-pasting. About on-demand transcoding as the alternative to per-viewer file generation. About package assembly time as a tractable problem when the data model is right. About why transfer speed depends on what is being transferred. About watermarking and security as integrated capabilities, not bolted-on plug-ins.

What changed in 2024 and 2025 is not the diagnosis. It is the urgency. The platform count finished climbing past the point where stitched-together stacks could keep up. The streaming revenue data made it clear which platforms were growing. The operators who had been on borrowed time started running out of borrowed time.

What this means in 2026 and beyond

The operational rule for the rest of the decade is short. If your delivery system, your rights system, and your royalty system are three different tools talking to each other, the long tail of FAST and AVOD will eat you. If they are one tool, the long tail is your growth engine.

The shorter version: integration is not a vendor pitch in 2026. It is the difference between a distribution operation that scales to a hundred platforms and one that does not.

The shortest version: distribution is no longer about delivering content. It is about delivering coordinated state. Operations that internalize that finish the decade with platform partnerships they can grow. Operations that miss it finish the decade replacing five point tools with five other point tools and wondering why the throughput math still does not work.

The four years we spent working up to this argument were the years we needed to be sure of it. The next four years are the years it gets cheap to be wrong about it, and expensive to ignore.

How this argument built up over four years

- Introducing Content Delivery as a Service (June 2020) — the architectural bet

- The Insatiable Demand for Content (October 2022) — the demand-side prediction

- Stop Pitching the Same Titles to Amazon Prime (February 2024) — single source of truth

- (Almost) Everyone Does Avails Wrong (February 2024) — upstream root cause

- MRSS and HLS Explained (February 2024) — the FAST operational shape

- Streamlined Delivery: Prepopulated Templates (March 2024) — alternative to manual per-platform

- Demystifying On-Demand Transcoding (March 2024) — encode-stage architectural alternative

- How to Deliver Packages Before Your Coffee Cools (March 2024) — package assembly is tractable

- Content Transfer: Electrified (March 2024) — transfer is downstream of model

- Fast Track Your Content Delivery (April 2024) — the speed claim

- The Problem with Traditional Avails Systems (November 2024) — the structural diagnosis

- When We Wrote About Streaming Last January (May 2026) — financial half of the story

- Delivering to Netflix, Apple TV+, Prime Video, and 100+ FAST channels (May 2026) — operational half

- The Complete Content Delivery Playbook (May 2026) — lifecycle reference

Replacing point tools with another set of point tools?

Molten Cloud collapses the six-stage delivery lifecycle onto one record per title. Talk to our team.